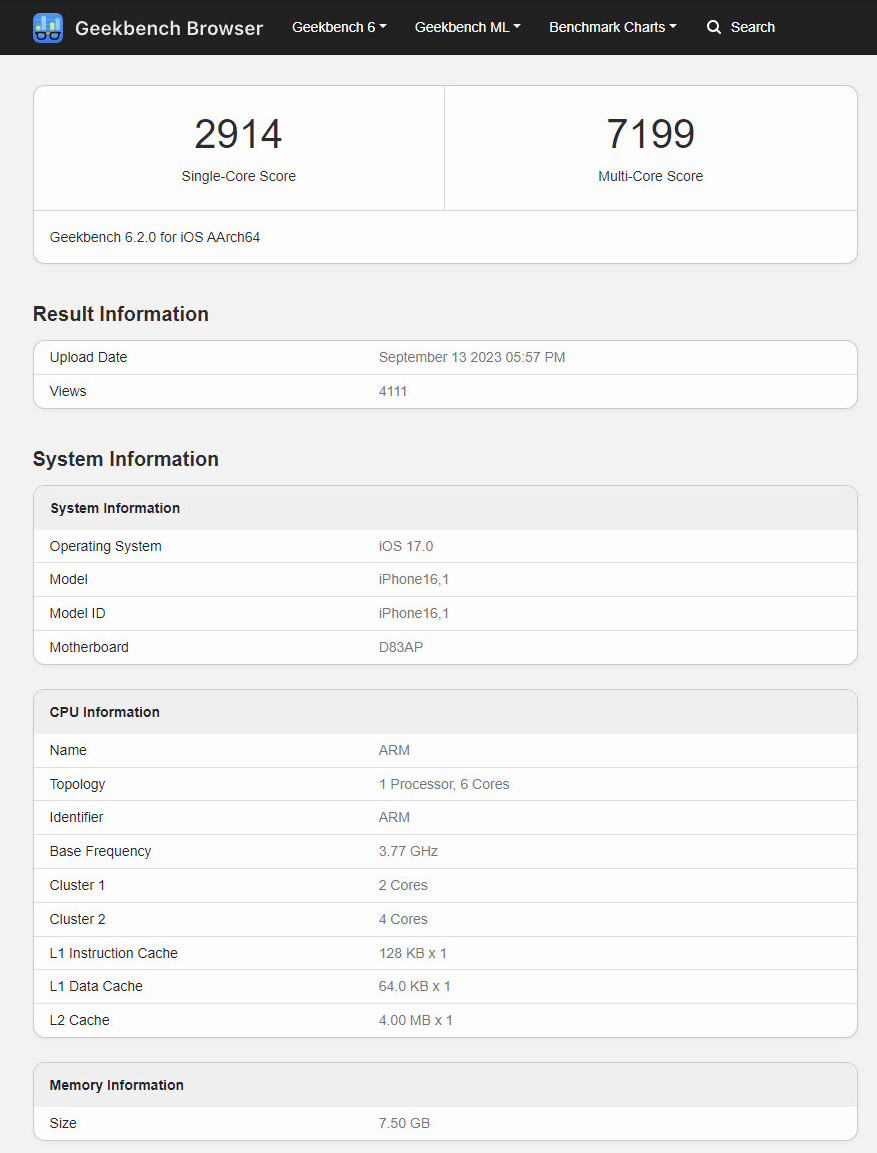

An Apple “iPhone16,1” was put through the Geekbench 6.2 gauntlet earlier this week—according to database info this pre-release sample was running a build of iOS 17.0 (currently in preview) and its logic board goes under the “D83AP” moniker. It is interesting to see a 16-series unit hitting the test phase only a day after the unveiling of Apple’s iPhone 15 Pro and Max models—the freshly benched candidate seems to house an A17 Pro SoC as well. The American tech giant has set lofty goals for said flagship chip, since it is “the industry’s first 3-nanometer chip. Continuing Apple’s leadership in smartphone silicon, A17 Pro brings improvements to the entire chip, including the biggest GPU redesign in Apple’s history. The new CPU is up to 10 percent faster with microarchitectural and design improvements, and the Neural Engine is now up to 2x faster.”

Geekbench is pretty useless for actual performance comparisons.

When I see someone mentioning a Geekbench multicore score, I just roll my eyes. Compared to other multicore benchmarks like Cinebench, Blender, and most gaming benchmarks, the scores are way off in terms of real-life performance. It looks like they benchmark multicore with separate threads: you know, one thread/job per core rather than one job spread over more than one core. This is great for mobile devices, because that shared processing is a huge power draw, so using a single core per task whenever possible is a huge power saver. It looks to me like Geekbench has always favored mobile devices and is going to stay that way.
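To make the distinction concrete, here is a minimal sketch of the two multicore styles being contrasted: one independent job per worker versus one job split across workers and merged at the end. It is not how Geekbench or any other benchmark is actually implemented; the `checksum` workload and both function names are made up for illustration, and Python threads only show the structure here, not real parallel speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def checksum(data):
    """Toy workload (hypothetical): sum of squares, standing in for a benchmark kernel."""
    return sum(x * x for x in data)


def separate_jobs(jobs, workers):
    """Style 1: each worker gets its own independent job; nothing is combined."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(checksum, jobs))


def shared_job(data, workers):
    """Style 2: a single job is split into chunks and the partial results are merged."""
    chunk = max(1, -(-len(data) // workers))  # ceiling division, at least 1
    chunks = [data[i:i + chunk] for i in range(0, len(data), chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(checksum, chunks))


if __name__ == "__main__":
    data = list(range(1000))
    print(separate_jobs([data] * 4, workers=4))  # four independent results
    print(shared_job(data, workers=4))           # one merged result
```

The second style needs a split step and a merge step, so the cores have to coordinate; the first style scales with core count almost for free, which is why the two can give very different "multicore" numbers on the same chip.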

x86 has been the standard for waaayyyyyy too long

Others have come out but they never grab a foothold because you literally have to recompile everything. Some companies have even included their own compilers and optimizers but then you run into other packages and binaries that don’t work on anything but x86. One company literally wanted to give us a new 256-core system they were prototyping for a large scale web farm, but we ran into too many package issues that we couldn’t convert over to their architecture. And that was with Linux.

Yup. Some, like DEC, even offered on-the-fly binary recompilation from x86 to Alpha in Windows, back when Windows NT was available on 4 or 5 different processors (PowerPC, MIPS, Alpha, x86, and I think eventually Intel’s original 64-bit x86 replacement).

x86 has evolved so much in the last 40 years that it’s still able to keep a foothold for PCs.

I’m curious what’s about to happen moving forward as they continue to increase transistor densities and shrink die sizes.

Apple succeeded at switching over to ARM though, they’re thriving.

They have more direct control over their software ecosystem though

Absolutely, an iron fist. But that worked out well in this case.

Yup, smart move for sure

They’ve already switched architectures twice before, so they’ve got some experience at it.

Apple definitely has a way of doing what is right sometimes, and forcing the industry’s hand to move forward.

… Sometimes. Sometimes this definitely backfires, but not this time.

hell, even intel tried to get away from x86 with itanium but failed miserably… and they screwed themselves again by recently dumping the RISC-V pathfinding a year after initiation. i worry about the future of Arc, but maybe they’ll pull their head out of their ass on that one if we’re lucky.

Funny thing is the 8086 was only supposed to be a stopgap. Their next big thing ended up being a miserable flop, but the 8086 took off and the rest is history.

Well, it’s one of those if it ain’t broke, don’t fix it things. Like QWERTY keyboard layouts.

The alternatives kind of need to support ACPI, or some similar standard

DeviceTree works for embedded devices, but it’s not great for end users who are trying to get interoperability between suppliers

Should just throw an x86 coprocessor slot on the motherboard, that way we can all embrace RISC-V (or arm or whatever the fuck)

How much has single core performance really changed in the past decade? You might as well be comparing a 2016 i5 at that point.

Not to mention the i9-13900K is made for multicore performance and has lower single-core performance than its own alternate models.

- 7600K: 1481 ST
- 13600K: 2654 ST
- 13700K: 2820 ST
- 13900K: 2955 ST

So to answer the question: something like an 80% improvement i5 to i5 (7600K to 13600K), and roughly 2x if you compare the 13900K to that 2016-era i5. And the 13900K does have the highest single-thread performance in the 13th-gen models from what I can tell.
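For anyone who wants to check the arithmetic, here is a quick sketch that computes the ratios from the single-thread scores quoted in this comment. The function names are just made up for the example, and the scores are the ones listed here, not freshly pulled from the Geekbench browser.

```python
# Geekbench 6 single-thread (ST) scores as quoted in this comment.
ST_SCORES = {
    "7600K": 1481,
    "13600K": 2654,
    "13700K": 2820,
    "13900K": 2955,
}


def speedup(new, old):
    """How many times higher `new` scores than `old`."""
    return ST_SCORES[new] / ST_SCORES[old]


def improvement_percent(new, old):
    """The same comparison expressed as a percentage gain."""
    return (speedup(new, old) - 1.0) * 100.0


if __name__ == "__main__":
    baseline = "7600K"
    for chip in ("13600K", "13700K", "13900K"):
        print(
            f"{chip} vs {baseline}: {speedup(chip, baseline):.2f}x "
            f"(+{improvement_percent(chip, baseline):.0f}%)"
        )
```

That works out to about 1.79x for the 13600K, 1.90x for the 13700K, and just under 2x for the 13900K, all against the 7600K.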

- https://browser.geekbench.com/processors/intel-core-i5-7600k
- https://browser.geekbench.com/processors/intel-core-i5-13600k
- https://browser.geekbench.com/processors/intel-core-i7-13700k
- https://browser.geekbench.com/processors/intel-core-i9-13900k

Yeah, this just seems like marketing crap from Apple.