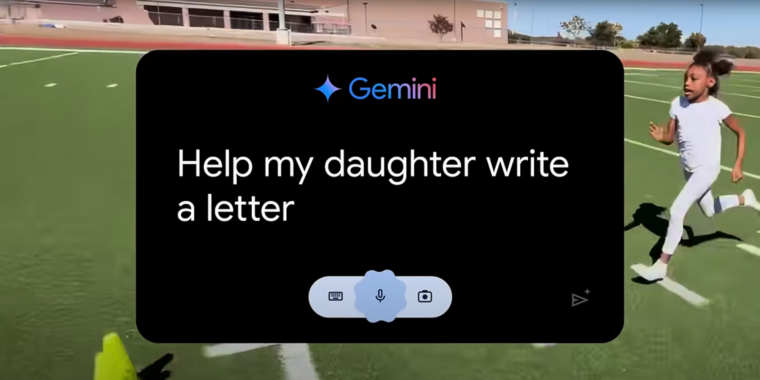

If you’ve watched any Olympics coverage this week, you’ve likely been confronted with an ad for Google’s Gemini AI called “Dear Sydney.” In it, a proud father seeks help writing a letter on behalf of his daughter, who is an aspiring runner and superfan of world-record-holding hurdler Sydney McLaughlin-Levrone.

“I’m pretty good with words, but this has to be just right,” the father intones before asking Gemini to “Help my daughter write a letter telling Sydney how inspiring she is…” Gemini dutifully responds with a draft letter in which the LLM tells the runner, on behalf of the daughter, that she wants to be “just like you.”

I think the most offensive thing about the ad is what it implies about the kinds of human tasks Google sees AI replacing. Rather than using LLMs to automate tedious busywork or difficult research questions, “Dear Sydney” presents a world where Gemini can help us offload a heartwarming shared moment of connection with our children.

Inserting Gemini into a child’s heartfelt request for parental help makes it seem like the parent in question is offloading their responsibilities to a computer in the coldest, most sterile way possible. More than that, it comes across as an attempt to avoid an opportunity to bond with a child over a shared interest in a creative way.

This is one of the weirdest of several weird things about the people who are marketing AI right now

I went to ChatGPT right now and one of the auto prompts it has is “Message to comfort a friend”

If I was in some sort of distress and someone sent me a comforting message and I later found out they had ChatGPT write the message for them I think I would abandon the friendship as a pointless endeavor

What world do these people live in where they’re like “I wish AI would write meaningful messages to my friends for me, so I didn’t have to”

The thing they’re trying to market is that a lot of people genuinely don’t know what to say at certain times. Instead of replacing an emotional activity, it’s meant to be used when you literally can’t do it but need to.

Obviously that’s not the way it should go, but it is an actual problem they’re trying to speak to. I had a friend feel real down in high school because his parents didn’t attend an award ceremony, and I couldn’t help ’cause I just didn’t know what to say. AI could’ve hypothetically given me a rough draft or inspiration. Obviously I wouldn’t have just texted what the AI said, but it could’ve gotten me past the part I was stuck on.

In my experience, AI is shit at that anyway. 9 times out of 10 when I ask it anything even remotely deep it restates the problem like “I’m sorry to hear your parents couldn’t make it”. AI can’t really solve the problem Google wants it to, and I’m honestly glad it can’t.

They’re trying to market emotion because emotion sells.

It’s also exactly what AI should be kept away from.

But AI also lies and hallucinates, so you can’t market it for writing work documents. That could get people fired.

Really though, I wonder if the marketing was already outsourced to the LLM?

Sadly, after working in advertising for over 10 years, I know how dumb the art directors can be about messaging like this. It’s why I got out.

A lot of the times when you don’t know what to say, it’s not because you can’t find the right words, but the right words simply don’t exist. There’s nothing that captures your sorrow for the person.

Funny enough, the right thing to say is that you don’t know what to say. And just offer yourself to be there for them.

Yeah. If it had any empathy this would be a good task and a genuinely helpful thing. As it is, it’s going to produce nothing but pain and confusion and false hope if turned loose on this task.

The article makes a mention of the early part of the movie Her, where he’s writing a heartfelt, personal card that turns out to be his job, writing from one stranger to another. That reference was exactly on target: I think most of us thought outsourcing such a thing was a completely bizarre idea, and it is. It’s maybe even worse if you’re not even outsourcing to someone with emotions but to an AI.

You’re in luck, you can subscribe to an AI friend instead. /s

You’ve seen porn addiction yes, but have you seen AI boyfriend emotional attachment addiction?

Guaranteed to ruin your life! Act now.

Don’t date robots!

Brought to you by the Space Pope.

Shut up! You don’t understand what me and Marilyn Monrobot have together!

Those AI dating sites always have the creepiest uncanny valley profile photos. It’s fun to scroll them sometimes.

Uhh “subscribing to an AI friend” is technically possible in the form of a character.ai sub. Not that I recommend it, but in this day and age your statement is not sarcastic.

These seem like people who treat relationships like a game or an obligation instead of really wanting to know the person.

it’s completely tone-deaf if that’s their understanding of humanity. Similar to the iPad app ad that theluddite blog referred to here in his post about capture platforms. Making art, music and human emotional connections are not the tedious parts that need to be automated away ffs

My initial response is the same as yours, but I wonder… If the intent was to comfort you and the effect was to comfort you, wasn’t the message effective? How is it different from using a cell phone to get a reminder about a friend’s birthday rather than memorizing when the birthday is?

One problem that both the AI message and the birthday reminder have is that they don’t require much effort. People apparently appreciate having effort expended on their behalf even if it doesn’t create any useful result. This is why I’m currently making a two-hour round trip to bring a birthday cake to my friend instead of simply telling her to pick the one she wants, have it delivered, and bill me. (She has covid so we can’t celebrate together.) I did make the mistake of telling my friend that I had a reminder in my phone for this, so now she knows I didn’t expend the effort to memorize the date.

Another problem that only the AI message has is that it doesn’t contain information that the receiver wants to know, which is the specific mental state of the sender rather than just the presence of an intent to comfort. Presumably if the receiver wanted a message from an AI, she would have asked the AI for it herself.

Anyway, those are my Asperger’s musings. The next time a friend needs comforting, I will tell her “I wish you well. Ask an AI for inspirational messages appropriate for these circumstances.”

I don’t think the recipient wants to know the specific mental state of the sender. Presumably, the person is already dealing with a lot, and it’s unlikely they’re spending much time wondering what friends not going through it are thinking about. Grief and stress tend to be kind of self-centering that way.

The intent to comfort is the important part. That’s why the suggestion of “I don’t know what to say, but I’m here for you” can actually be an effective thing to say in these situations.

Don’t need to ask an AI when every website is AI-generated blogspam these days